Our evaluation aimed to answer the following research questions:

- RQ1 — Effectiveness: How effective is MT at detecting failures in VLA-enabled robots?

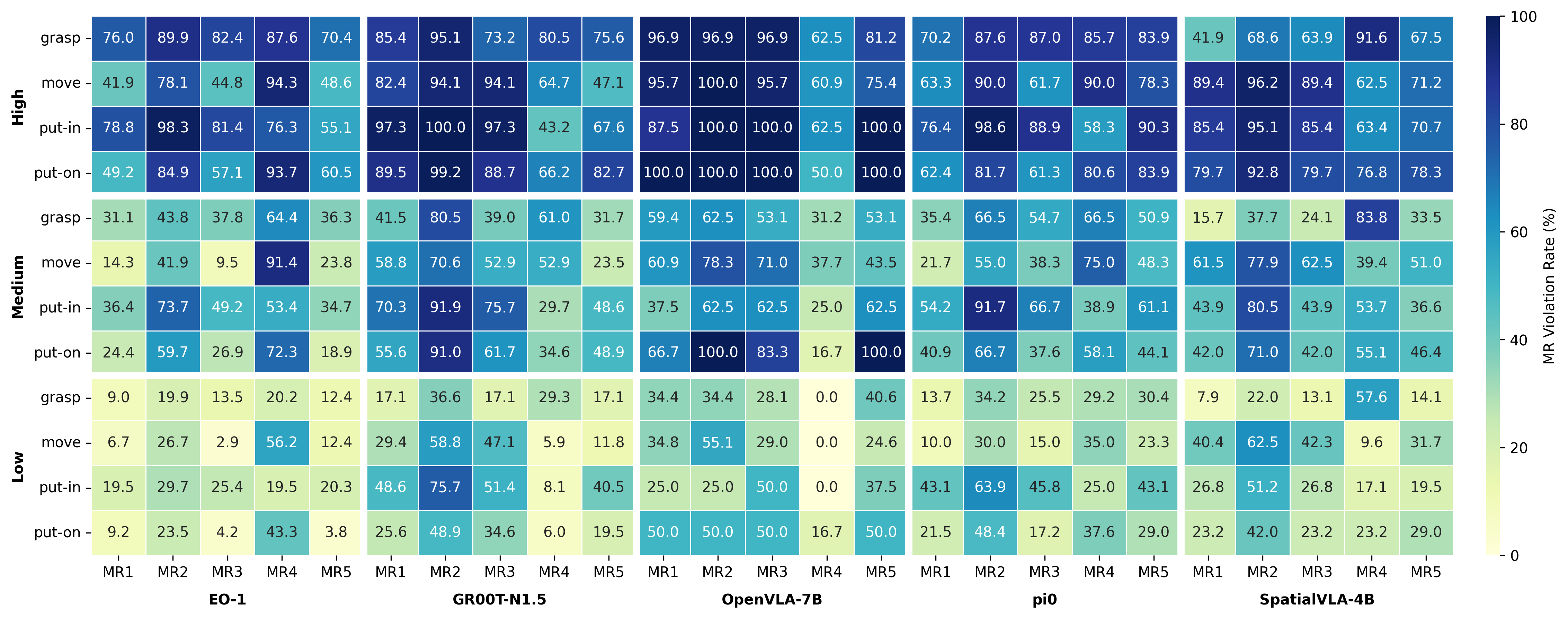

- RQ2 — Sensitivity: How do different MRs differ in their ability to detect failures?

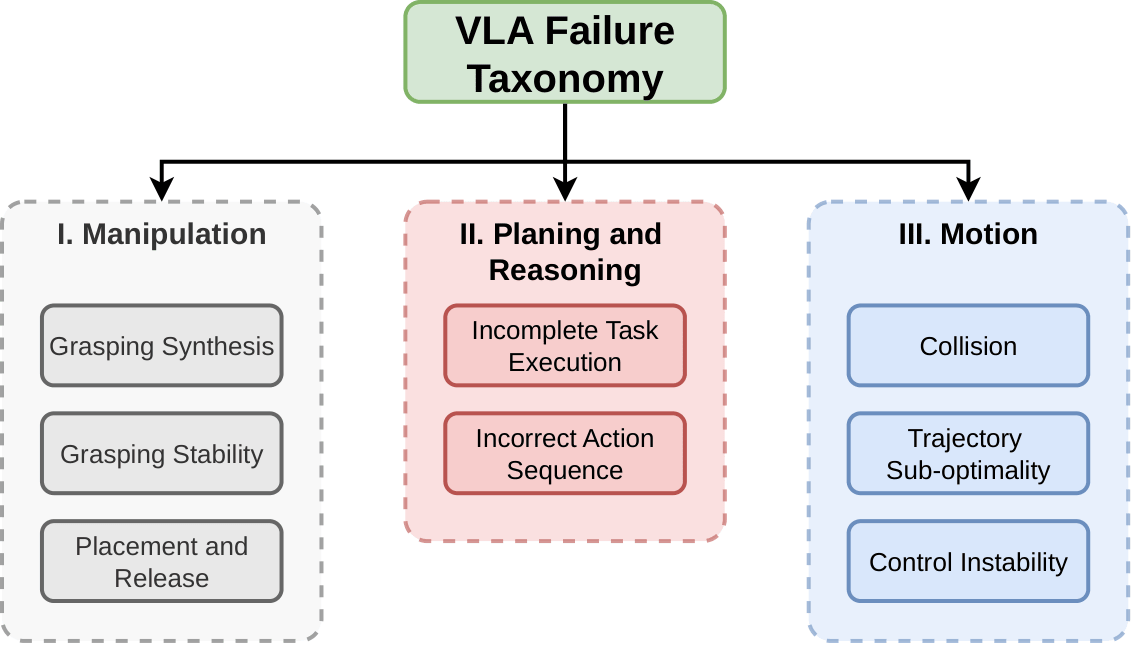

- RQ3 — Taxonomy: What types of failures does MT reveal?